This task was performed in the METRICS HEART-MET Competition at the Cobot Maker Space, University of Nottingham, United Kingdom.

This functionality benchmark assesses the robot’s ability to recognize gestures performed by a human. The robot is placed in front of a human who performs a gesture, and it must identify which gesture is being performed.

Project Description:

The gestures (dependent variable) will be chosen from a list consisting of:

- Head gestures: These are detected by locating the face with the Haar cascade classifier haarcascade_frontalface_alt.xml and then tracking its movement in the x and y directions relative to the center of the face. The following head gestures are detected in this project:

  - Nodding

  - Shaking head

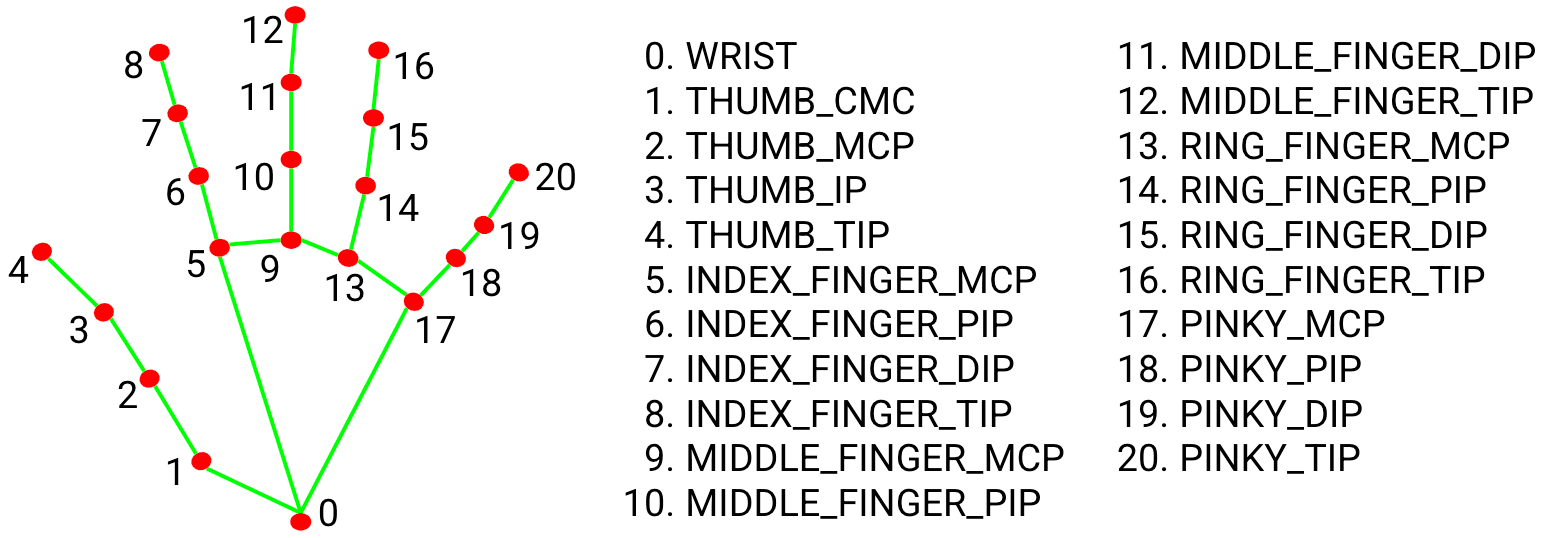
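The head-gesture idea described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the function names (`face_centers_from_video`, `classify_head_gesture`) and the thresholds `min_travel` and `ratio` are assumptions made for this example. It treats mostly vertical movement of the face center as nodding and mostly horizontal movement as shaking the head.

```python
from typing import List, Optional, Sequence, Tuple

Point = Tuple[float, float]


def face_centers_from_video(source=0, max_frames: int = 60) -> List[Point]:
    """Collect face-center positions (in pixels) from a camera or video file."""
    import cv2  # imported lazily so the classifier below runs without OpenCV

    cascade = cv2.CascadeClassifier(
        cv2.data.haarcascades + "haarcascade_frontalface_alt.xml"
    )
    capture = cv2.VideoCapture(source)
    centers: List[Point] = []
    try:
        for _ in range(max_frames):
            ok, frame = capture.read()
            if not ok:
                break
            gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
            faces = cascade.detectMultiScale(gray, scaleFactor=1.1, minNeighbors=5)
            if len(faces) == 0:
                continue  # no face in this frame
            # Keep the largest detection, assumed to be the person gesturing.
            x, y, w, h = max(faces, key=lambda f: f[2] * f[3])
            centers.append((x + w / 2.0, y + h / 2.0))
    finally:
        capture.release()
    return centers


def classify_head_gesture(
    centers: Sequence[Point],
    min_travel: float = 15.0,  # assumed minimum movement in pixels
    ratio: float = 1.5,        # assumed dominance of one axis over the other
) -> Optional[str]:
    """Label a sequence of face centers as nodding, shaking head, or None."""
    if len(centers) < 2:
        return None
    xs = [c[0] for c in centers]
    ys = [c[1] for c in centers]
    dx = max(xs) - min(xs)  # horizontal range of the face center
    dy = max(ys) - min(ys)  # vertical range of the face center
    if dy >= min_travel and dy > ratio * dx:
        return "nodding"
    if dx >= min_travel and dx > ratio * dy:
        return "shaking head"
    return None
```

Calling `classify_head_gesture(face_centers_from_video())` would label a short webcam clip. The thresholds depend on camera resolution and distance to the person, so they would need tuning in practice.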

- Hand gestures: These are detected using MediaPipe Hands, which provides 21 3D landmarks of a hand, as shown in the figure below. The following hand gestures are detected in this project:

  - Stop sign

  - Thumbs down

  - Thumbs up

  - Waving
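As a rough illustration of how the 21 MediaPipe Hands landmarks could be turned into the gestures listed above, here is a minimal sketch. The rules and thresholds are assumptions made for this example, not the project's actual method: four extended fingers are read as a stop sign, a closed fist with the thumb tip above or below its base as thumbs up or thumbs down, and a hand whose horizontal position reverses direction several times as waving. Landmark indices follow the MediaPipe convention (0 is the wrist, 4 the thumb tip, 8 the index fingertip, and so on), with y increasing downward in the image.

```python
import math
from typing import List, Optional, Sequence, Tuple

Landmark = Tuple[float, float, float]  # normalized (x, y, z)

WRIST, THUMB_MCP, THUMB_TIP = 0, 2, 4
# (PIP joint, fingertip) for index, middle, ring and pinky fingers.
FINGERS = ((6, 8), (10, 12), (14, 16), (18, 20))


def landmarks_from_frame(frame_bgr, hands) -> Optional[List[Landmark]]:
    """Run a mediapipe.solutions.hands.Hands instance on one BGR frame."""
    import cv2  # imported lazily so the classifiers below run without OpenCV

    results = hands.process(cv2.cvtColor(frame_bgr, cv2.COLOR_BGR2RGB))
    if not results.multi_hand_landmarks:
        return None
    return [(p.x, p.y, p.z) for p in results.multi_hand_landmarks[0].landmark]


def _dist(a: Landmark, b: Landmark) -> float:
    return math.hypot(a[0] - b[0], a[1] - b[1])


def classify_static_hand(lm: Sequence[Landmark], margin: float = 0.05) -> Optional[str]:
    """Label one frame as stop sign, thumbs up, thumbs down, or None."""
    if len(lm) != 21:
        return None
    wrist = lm[WRIST]
    # A finger counts as extended when its tip is farther from the wrist
    # than its PIP joint (assumed heuristic).
    extended = [_dist(lm[tip], wrist) > _dist(lm[pip], wrist) for pip, tip in FINGERS]
    if all(extended):
        return "stop sign"
    if not any(extended):
        dy = lm[THUMB_TIP][1] - lm[THUMB_MCP][1]
        if dy < -margin:  # thumb tip clearly above its base
            return "thumbs up"
        if dy > margin:   # thumb tip clearly below its base
            return "thumbs down"
    return None


def is_waving(xs: Sequence[float], min_travel: float = 0.05, min_reversals: int = 2) -> bool:
    """Detect side-to-side motion from per-frame horizontal hand positions."""
    if not xs:
        return False
    reversals, direction, anchor = 0, 0, xs[0]
    for x in xs[1:]:
        step = x - anchor
        if abs(step) < min_travel:
            continue  # ignore jitter smaller than the assumed threshold
        new_direction = 1 if step > 0 else -1
        if direction and new_direction != direction:
            reversals += 1
        direction, anchor = new_direction, x
    return reversals >= min_reversals
```

In use, `landmarks_from_frame` would be called once per video frame, the static label taken from `classify_static_hand`, and the wrist x-coordinates of recent frames passed to `is_waving`. A real system would likely need extra handling for hand orientation and for distinguishing a held stop sign from a wave.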

Our team secured first place in the competition; the details can be found here.

GitHub repository: The repository can be found here.